Inter-Rater Reliability Essentials: Practical Guide In R: Kassambara, Alboukadel: 9781707287567: Amazon.com: Books

Correlation Kappa Coefficient of the categorical data and the p value... | Download Scientific Diagram

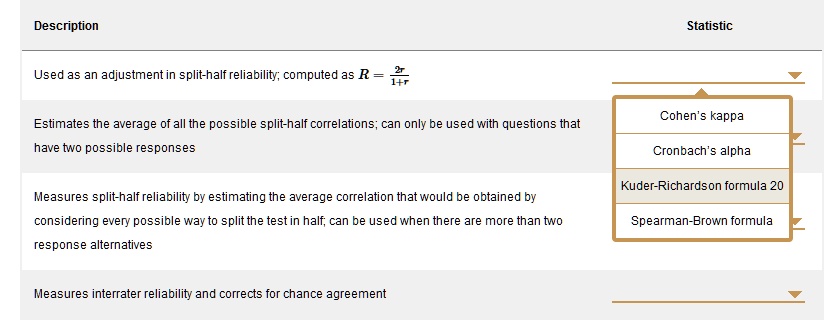

SOLVED: Description Statistic Used as an adjustment in split-half reliability; computed as R = # Cohen's kappa Estimates the average of all the possible split-half correlations can only be used with questions

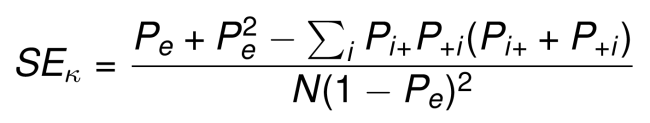

Kappa Statistic is not Satisfactory for Assessing the Extent of Agreement Between Raters | Semantic Scholar

![PDF: 1.3 Agreement Statistics TUTORIAL IN BIOSTATISTICS Kappa coefficients in medical research | Semantic Scholar](https://d3i71xaburhd42.cloudfront.net/2594de0bb525f84b956e8b2416b6113f7d125348/11-TableIII-1.png)